Bayes estimator

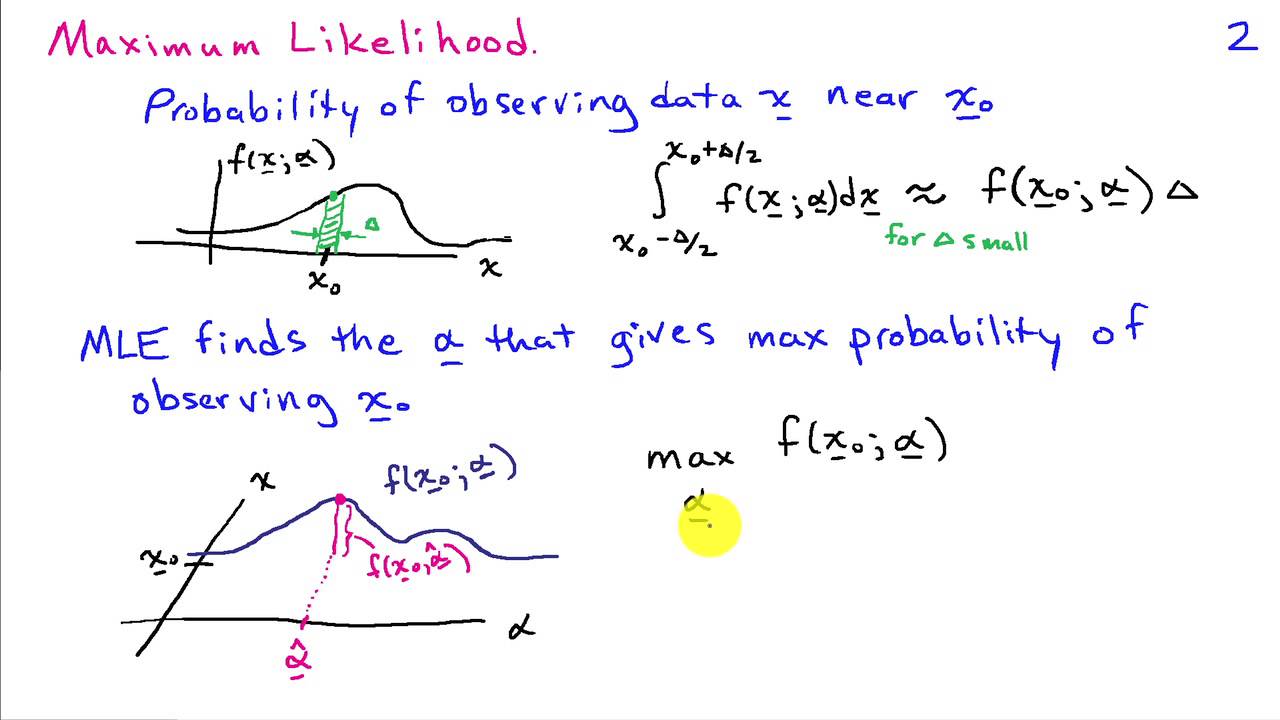

A Bayes estimator (named after Thomas Bayes) is, in mathematical statistics, an estimator that takes into account any available prior knowledge about the parameter to be estimated, in addition to the observed data. Following the approach of Bayesian statistics, this prior knowledge is modeled by a distribution for the parameter, the prior (a priori) distribution. Bayes' theorem then yields the conditional distribution of the parameter given the observed data, the posterior (a posteriori) distribution. To obtain a single point estimate from it, location measures of the posterior distribution such as the expected value, the mode, or the median are used as so-called Bayes estimators. Because the posterior expected value is the most important estimator and the one most commonly used in practice, some authors refer to it as the Bayes estimator. In general, a Bayes estimator is defined as the value that minimizes the expected value of a loss function under the posterior distribution. For a quadratic loss function, this value is exactly the posterior expected value.

Definition

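Let $\theta$ denote the parameter to be estimated and $f(x \mid \theta)$ the likelihood, that is, the distribution of the observation $x$ as a function of $\theta$. Let the prior distribution of the parameter be denoted by $\pi(\theta)$. Then

$$\pi(\theta \mid x) = \frac{f(x \mid \theta)\,\pi(\theta)}{\int f(x \mid \theta')\,\pi(\theta')\,d\theta'}$$

is the posterior distribution. Furthermore, let a function $L(\theta, \hat\theta)$, called the loss function, be given, whose values model the loss incurred when $\theta$ is estimated by $\hat\theta$. A value $\hat\theta$ that minimizes the expected value

$$\operatorname{E}\bigl(L(\theta, \hat\theta) \mid x\bigr) = \int L(\theta, \hat\theta)\,\pi(\theta \mid x)\,d\theta$$

of the loss under the posterior distribution is then called a Bayes estimate of $\theta$. If $\theta$ has a discrete distribution, the integrals over $\theta$ are to be understood as sums over $\theta$.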
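As a rough illustration of this definition, the following sketch approximates the posterior on a grid of parameter values and picks the grid point with the smallest posterior expected loss. The function names, the grid resolution, and the data (one success in three Bernoulli trials with a flat prior) are assumptions made purely for illustration.

```python
def posterior_on_grid(likelihood, prior, grid):
    """Normalized posterior weights on a grid of parameter values (Bayes' theorem)."""
    unnormalized = [likelihood(t) * prior(t) for t in grid]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]


def bayes_estimate(loss, grid, weights):
    """Grid point that minimizes the posterior expected loss."""
    def expected_loss(est):
        return sum(loss(t, est) * w for t, w in zip(grid, weights))
    return min(grid, key=expected_loss)


# Hypothetical data: one success in three trials, flat prior on [0, 1].
grid = [i / 200 for i in range(201)]
weights = posterior_on_grid(lambda t: t * (1 - t) ** 2, lambda t: 1.0, grid)

# Loss functions take (true parameter, estimate), matching L(theta, theta_hat).
squared = bayes_estimate(lambda t, est: (est - t) ** 2, grid, weights)
absolute = bayes_estimate(lambda t, est: abs(est - t), grid, weights)
print(squared, absolute)
```

Swapping the loss function changes the estimate without touching the posterior, which is the point of defining the estimator through the loss.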

Special cases

Posterior expected value

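An important and frequently used loss function is the squared deviation

$$L(\theta, \hat\theta) = (\hat\theta - \theta)^2.$$

With this loss function, the Bayes estimator is the expected value of the posterior distribution, or posterior expected value for short:

$$\hat\theta = \operatorname{E}(\theta \mid x) = \frac{\int \theta\, f(x \mid \theta)\,\pi(\theta)\,d\theta}{\int f(x \mid \theta)\,\pi(\theta)\,d\theta}.$$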

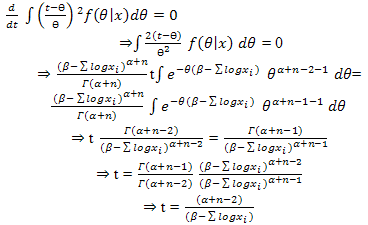

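This can be seen as follows. Differentiating the posterior expected loss with respect to $\hat\theta$ gives

$$\frac{d}{d\hat\theta} \int (\hat\theta - \theta)^2\,\pi(\theta \mid x)\,d\theta = 2 \int (\hat\theta - \theta)\,\pi(\theta \mid x)\,d\theta = 2\bigl(\hat\theta - \operatorname{E}(\theta \mid x)\bigr).$$

Setting this derivative to zero and solving for $\hat\theta$ yields $\hat\theta = \operatorname{E}(\theta \mid x)$, the formula above.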

Posterior median

Another important Bayes estimator is the median of the posterior distribution. It results from the linear loss function

$$L(\theta, \hat\theta) = |\hat\theta - \theta|,$$

the absolute value of the error. For a continuous posterior distribution, the corresponding Bayes estimator is obtained as the solution of the equation

$$\int_{-\infty}^{\hat\theta} \pi(\theta \mid x)\,d\theta = \frac{1}{2},$$

that is, as the median of the distribution with density $\pi(\cdot \mid x)$.
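The step from the absolute loss to the median condition can be sketched as follows, writing $\pi(\theta \mid x)$ for the posterior density of the parameter $\theta$ and $\hat\theta$ for the estimate. The sketch assumes a continuous posterior density with finite expected value, so that differentiation under the integral sign is permitted.

$$
\begin{aligned}
\operatorname{E}\bigl(|\hat\theta - \theta| \mid x\bigr)
  &= \int_{-\infty}^{\hat\theta} (\hat\theta - \theta)\,\pi(\theta \mid x)\,d\theta
   + \int_{\hat\theta}^{\infty} (\theta - \hat\theta)\,\pi(\theta \mid x)\,d\theta, \\
\frac{d}{d\hat\theta}\operatorname{E}\bigl(|\hat\theta - \theta| \mid x\bigr)
  &= \int_{-\infty}^{\hat\theta} \pi(\theta \mid x)\,d\theta
   - \int_{\hat\theta}^{\infty} \pi(\theta \mid x)\,d\theta
   = 2\int_{-\infty}^{\hat\theta} \pi(\theta \mid x)\,d\theta - 1.
\end{aligned}
$$

The boundary terms from differentiating the integration limits vanish because both integrands are zero at $\theta = \hat\theta$. Setting the derivative to zero gives a posterior probability of $1/2$ below $\hat\theta$.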

Posterior mode

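For a discretely distributed parameter $\theta$, a natural choice is the zero-one loss function

$$L(\theta, \hat\theta) = \begin{cases} 0 & \text{if } \hat\theta = \theta, \\ 1 & \text{otherwise,} \end{cases}$$

which assigns a constant loss to all wrong estimates and leaves only an exact estimate unpenalized. The expected value of this loss function is the posterior probability of the event $\theta \neq \hat\theta$, that is, $1 - \pi(\hat\theta \mid x)$. It is minimized at the points $\hat\theta$ at which $\pi(\hat\theta \mid x)$ is largest, that is, at the modes of the posterior distribution.

For a continuously distributed $\theta$, the event $\theta = \hat\theta$ has probability zero for every $\hat\theta$. In this case one can instead consider, for a given (small) $\varepsilon > 0$, the loss function

$$L_\varepsilon(\theta, \hat\theta) = \begin{cases} 0 & \text{if } |\hat\theta - \theta| \le \varepsilon, \\ 1 & \text{otherwise.} \end{cases}$$

In the limit $\varepsilon \to 0$, the posterior mode is again obtained as the Bayes estimator.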
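The limiting behaviour can be checked numerically. The sketch below assumes a hypothetical Beta(2, 3) posterior, chosen only because its distribution function is a simple polynomial and its mode is 1/3. The expected loss with tolerance epsilon equals one minus the posterior probability of the window around the estimate, so the function maximizes that window probability over a grid. All names and the grid size are illustrative.

```python
def beta23_cdf(p):
    """Distribution function of Beta(2, 3), with the argument clipped to [0, 1]."""
    p = min(max(p, 0.0), 1.0)
    return 6 * p**2 - 8 * p**3 + 3 * p**4


def eps_loss_estimate(eps, steps=3000):
    """Grid minimizer of the expected epsilon-loss 1 - P(|theta - est| <= eps | x)."""
    grid = [i / steps for i in range(steps + 1)]
    # Minimizing the expected loss is the same as maximizing the window probability.
    return max(grid, key=lambda est: beta23_cdf(est + eps) - beta23_cdf(est - eps))


for eps in (0.2, 0.1, 0.01):
    print(eps, eps_loss_estimate(eps))
```

As the tolerance shrinks, the printed estimates move toward the mode of the assumed posterior.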

If a uniform distribution is used as the prior distribution, the posterior mode coincides with the maximum likelihood estimator, which is thus a special case of a Bayes estimator.

Example

An urn contains red and black balls in an unknown composition, that is, the probability $p$ of drawing a red ball is unknown. To estimate $p$, $n$ balls are drawn successively with replacement. Only one draw yields a red ball, so $x = 1$ is observed. The number of red balls drawn follows a binomial distribution with parameters $n$ and $p$, and therefore

$$f(1 \mid p) = \binom{n}{1}\,p\,(1-p)^{n-1} = n\,p\,(1-p)^{n-1}.$$

Since no information about the parameter $p$ is available, the uniform distribution is used as the prior distribution, that is, $\pi(p) = 1$ for $p \in [0, 1]$. The posterior distribution is therefore

$$\pi(p \mid 1) = \frac{p\,(1-p)^{n-1}}{\int_0^1 q\,(1-q)^{n-1}\,dq} = n(n+1)\,p\,(1-p)^{n-1}.$$

This is the density of a beta distribution with parameters $2$ and $n$. The posterior expected value is therefore $2/(n+2)$ and the posterior mode is $1/n$. The posterior median must be determined numerically. In general, $k$ red balls in $n$ draws give $(k+1)/(n+2)$ as the posterior expected value and $k/n$, the classical maximum likelihood estimator, as the posterior mode. For values of $n$ that are not too small, $(k + 2/3)/(n + 4/3)$ is a good approximation of the posterior median.
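The three posterior summaries for the urn experiment can be computed with the standard library alone. The sketch below assumes a uniform prior and uses the fact that a beta distribution function with integer parameters equals a binomial tail sum, so the median can be found by bisection. The function name and the concrete number of draws (n = 3) are assumptions for illustration, not values taken from the text.

```python
from math import comb


def beta_posterior_summary(k, n, tol=1e-12):
    """Mean, mode and median of the Beta(k + 1, n - k + 1) posterior that
    results from k red balls in n draws under a uniform prior."""
    mean = (k + 1) / (n + 2)
    mode = k / n

    def cdf(p):
        # For integer parameters the beta CDF is a binomial tail sum.
        m = n + 1
        return sum(comb(m, j) * p**j * (1 - p) ** (m - j) for j in range(k + 1, m + 1))

    lo, hi = 0.0, 1.0
    while hi - lo > tol:  # bisection for cdf(p) = 1/2
        mid = (lo + hi) / 2
        if cdf(mid) < 0.5:
            lo = mid
        else:
            hi = mid
    return mean, mode, (lo + hi) / 2


# Illustrative values only: the number of draws n = 3 is an assumption.
mean, mode, median = beta_posterior_summary(k=1, n=3)
approx_median = (1 + 2 / 3) / (3 + 4 / 3)  # closed-form approximation of the median
print(mean, mode, median, approx_median)
```

With these assumed values the median comes out at roughly 0.386, between the mode and the mean.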

Practical calculation

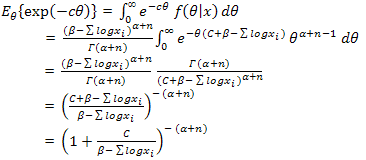

One obstacle to the application of Bayes estimators can be their numerical calculation. A classical approach is the use of so-called conjugate priors, for which the posterior distribution belongs to a known class of distributions whose location measures can then simply be looked up in a table. If, for example, an arbitrary beta distribution is used as the prior in the urn experiment above, the posterior distribution is again a beta distribution.
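For the beta prior in the urn experiment, the conjugate update reduces to adding counts to the prior parameters. The following minimal sketch shows this. The function names and the example numbers (a Beta(2, 2) prior and one red ball in three draws) are hypothetical.

```python
def beta_binomial_update(a, b, k, n):
    """Return the posterior Beta parameters after observing k red balls in
    n draws, starting from a Beta(a, b) prior on the probability of red."""
    if not 0 <= k <= n:
        raise ValueError("k must lie between 0 and n")
    return a + k, b + (n - k)


def beta_mean(a, b):
    """Expected value of a Beta(a, b) distribution."""
    return a / (a + b)


def beta_mode(a, b):
    """Mode of a Beta(a, b) distribution; this formula needs a > 1 and b > 1."""
    if a <= 1 or b <= 1:
        raise ValueError("closed-form mode requires a > 1 and b > 1")
    return (a - 1) / (a + b - 2)


# Hypothetical numbers: a Beta(2, 2) prior and 1 red ball in 3 draws.
a_post, b_post = beta_binomial_update(2, 2, k=1, n=3)
posterior_mean = beta_mean(a_post, b_post)
posterior_mode = beta_mode(a_post, b_post)
print(a_post, b_post, posterior_mean, posterior_mode)
```

Because the update only adds counts, processing the draws one at a time gives the same posterior as processing them all at once.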

For general prior distributions, the formula above for the posterior expected value shows that two integrals over the parameter space must be evaluated to calculate it. A classical approximation method is the Laplace approximation, in which the integrands are written as exponential functions and the exponents are then approximated by a second-order Taylor expansion.
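A minimal sketch of this idea for a binomial likelihood with a uniform prior follows. Both integrals in the posterior expected value then have the form of an integral of p^a (1 - p)^b over [0, 1], which is approximated by expanding the logarithm of the integrand to second order around its maximum. The function names and the data (30 successes in 100 trials) are assumptions, and the exact beta integral is included only for comparison.

```python
from math import exp, lgamma, log, pi, sqrt


def laplace_integral(a, b):
    """Laplace approximation of the integral of p**a * (1 - p)**b over [0, 1].

    The integrand is written as exp(h(p)) with h(p) = a*log(p) + b*log(1 - p),
    and h is replaced by its second-order Taylor expansion around its maximum.
    Requires a > 0 and b > 0 so that the maximum lies inside the interval.
    """
    p_star = a / (a + b)  # maximizer of h
    h_star = a * log(p_star) + b * log(1 - p_star)
    curvature = a / p_star**2 + b / (1 - p_star) ** 2  # equals -h''(p_star)
    return exp(h_star) * sqrt(2 * pi / curvature)


def exact_integral(a, b):
    """Exact value of the same integral, B(a + 1, b + 1), via log-gamma."""
    return exp(lgamma(a + 1) + lgamma(b + 1) - lgamma(a + b + 2))


k, n = 30, 100  # hypothetical data
# Posterior expected value as a ratio of two Laplace-approximated integrals.
approx_mean = laplace_integral(k + 1, n - k) / laplace_integral(k, n - k)
exact_mean = (k + 1) / (n + 2)
print(approx_mean, exact_mean)
```

The errors of the two approximated integrals largely cancel in the ratio, so the approximate expected value is usually closer to the exact one than either integral is on its own.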

With the advent of more powerful computers, further numerical methods for calculating the integrals that arise have become applicable (see Numerical integration). High-dimensional parameter spaces pose a particular problem, that is, the case in which a large number of parameters are to be estimated from the data. Here Monte Carlo methods are often used as approximation methods.
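One widely used family of Monte Carlo methods is Markov chain Monte Carlo. The sketch below uses a random-walk Metropolis sampler to estimate a posterior expected value in a one-dimensional toy problem with a binomial likelihood and a uniform prior. The function names, the tuning values (step size, number of draws, burn-in, seed), and the data are all assumptions chosen for illustration. The source does not name a specific algorithm.

```python
import math
import random


def log_posterior(p, k, n):
    """Unnormalized log posterior for k successes in n trials, uniform prior."""
    if not 0.0 < p < 1.0:
        return -math.inf
    return k * math.log(p) + (n - k) * math.log(1.0 - p)


def metropolis_posterior_mean(k, n, draws=20000, burn_in=2000, step=0.1, seed=0):
    """Estimate the posterior expected value with random-walk Metropolis."""
    rng = random.Random(seed)
    p = 0.5
    logp = log_posterior(p, k, n)
    total = 0.0
    for i in range(draws + burn_in):
        proposal = p + rng.gauss(0.0, step)
        logq = log_posterior(proposal, k, n)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, logq - logp)):
            p, logp = proposal, logq
        if i >= burn_in:
            total += p
    return total / draws


estimate = metropolis_posterior_mean(k=30, n=100)  # hypothetical data
print(estimate, 31 / 102)  # compare with the exact value (k + 1) / (n + 2)
```

The sampler only needs the posterior up to a constant factor, so the normalizing integral never has to be computed. This is what makes the approach usable when the parameter space is high-dimensional.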